E-scooter fleet management: scaling without breaking operations

A shared mobility operator started running into scaling problems as its e-scooter fleet expanded across multiple cities. Payment workflows, compliance monitoring, security alerts, and maintenance automations gradually became harder to manage inside a single operational flow. Every new automation solved one problem while making the overall system more difficult to maintain, troubleshoot, and scale.

This case study explores how the operator reorganized its automations, separated operational domains, and changed its approach to e-scooter fleet management as the business continued growing.

Why scaling e-scooter fleet management becomes operationally difficult

For shared e-scooter fleets, even small operational delays become visible immediately. Riders expect scooters to unlock almost instantly after payment confirmation. When that doesn’t happen, trips get abandoned, support tickets pile up, and operators start losing revenue before the ride even begins.

But pay-to-start issues are only one part of the pressure shared mobility operators deal with daily. Theft, tampering, unauthorized movement, speed-limit compliance, battery management, and city-specific regulations all add their own layer of operational chaos. Every new city comes with different reporting requirements. Every new workflow introduces more alerts, more dependencies, and more ways for unrelated systems to suddenly interfere with each other.

Managing a 200-unit e-scooter fleet is not a simple task, but it is still manageable.

Scaling to 2,000 scooters spread across multiple cities, teams, and operational rules starts feeling like trying to coordinate several overlapping systems while incidents, alerts, and workflow updates keep catching fire around you.

“This Is Fine” © KC Green

That was exactly the situation one shared mobility operator encountered as its fleet expanded: automations that had started as separate fixes ended up tangled together inside a single operational flow.

But let’s break it down.

Case study: automating shared e-scooter operations

A European shared mobility operator first approached the problem from a rider experience perspective.

The company was dealing with growing frustration around ride activation delays. Riders would complete payment in the app, stand next to the scooter waiting for it to unlock, and sometimes abandon the ride entirely if the process took too long.

At smaller fleet sizes, these incidents were manageable manually. But as the fleet expanded across multiple cities, support tickets related to delayed unlocks started increasing alongside rider complaints and operational overhead.

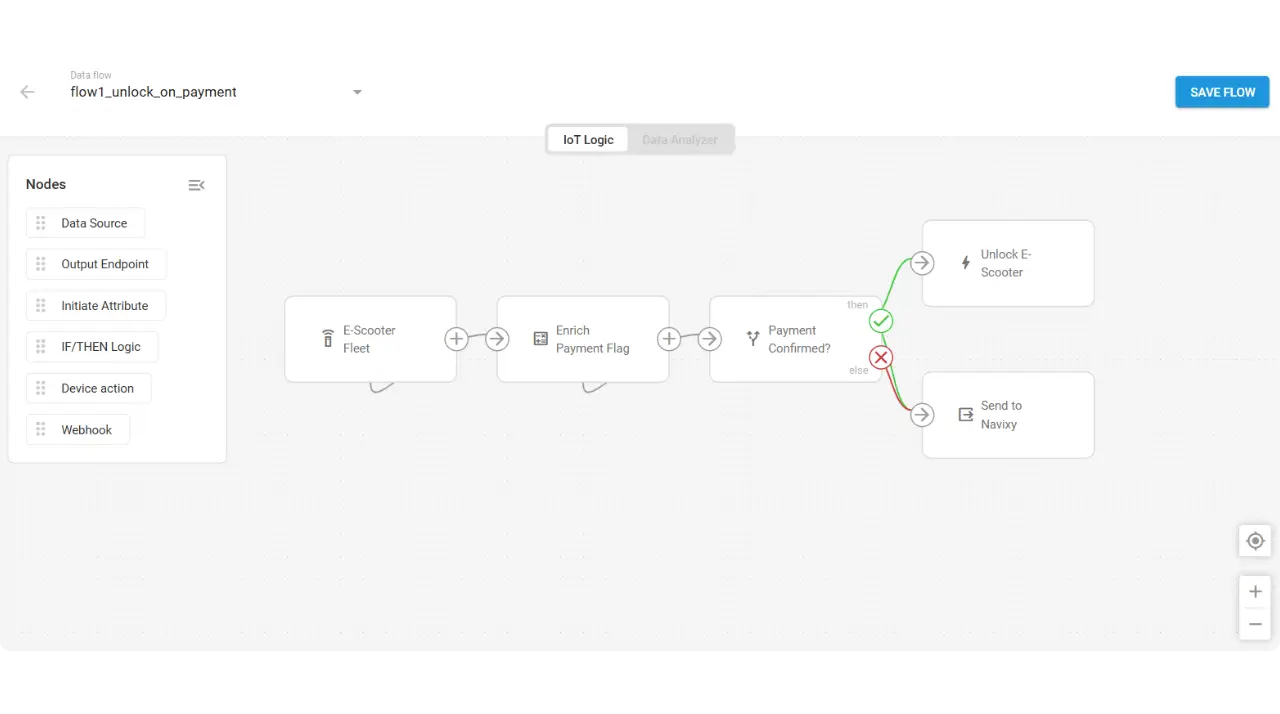

The operator decided to automate the payment-to-start process using IoT Logic.

Note: IoT Logic is a low-code environment for telematics and IoT automation. Workflows that previously required custom backend development and integrations can be configured visually in under an hour, depending on complexity.

The initial flow listened for a payment confirmation flag inside the incoming telemetry stream. Once the platform detected that the custom payment attribute had changed to a confirmed state, the flow triggered an automated M2M GPRS command that remotely released the scooter lock. At the same time, the telemetry continued forwarding into Navixy for monitoring and tracking purposes.

The automation itself was relatively simple. Payment confirmation in, unlock command out.

Ride-start latency dropped. Unlock reliability improved. Rider complaints decreased.

More importantly, the operator discovered how quickly operational automations could improve fleet responsiveness without requiring deeply coupled integrations or additional backend development.

Expansion into compliance and security workflows

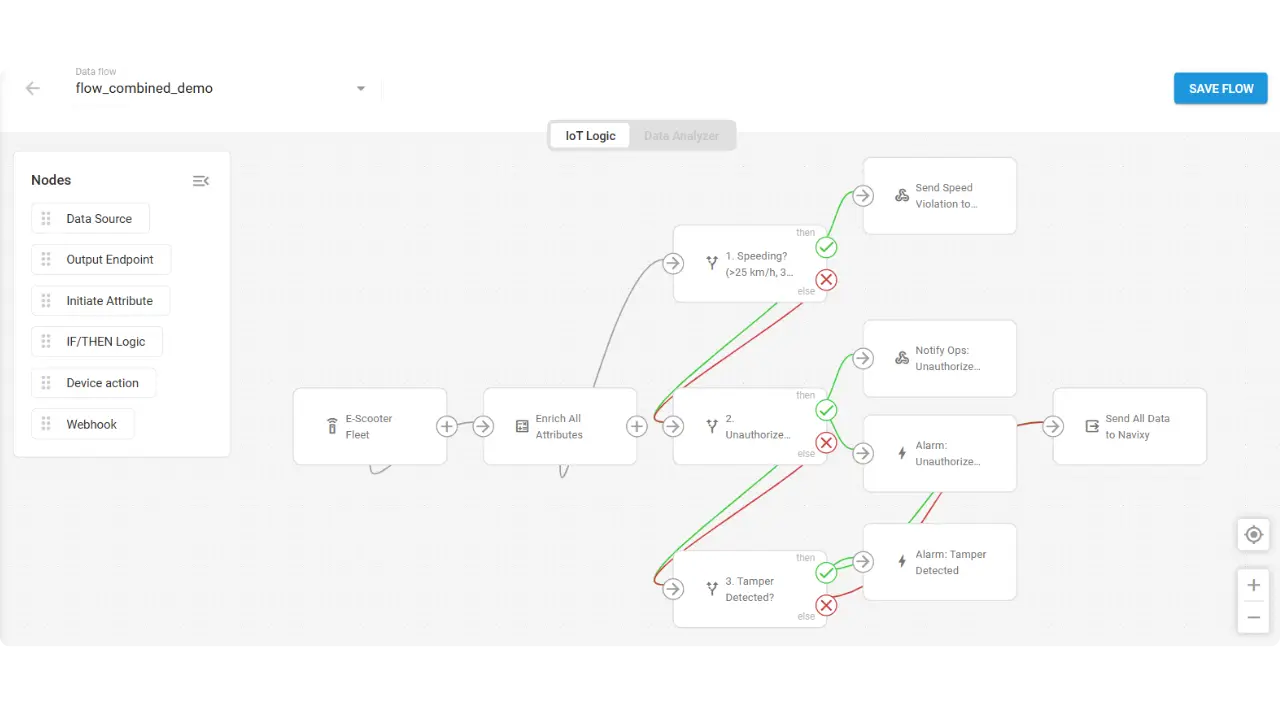

After automating the payment-to-start process, the operator started analyzing other operational bottlenecks and repetitive processes that could be handled automatically as the fleet continued growing.

Speed compliance was driven by municipal requirements. Many cities across Europe and the United States have adopted speed limits of about 25 km/h for shared e-scooters, and many require operators to report sustained violations. The operator needed a way to detect when a rider exceeded the limit across consecutive telemetry messages, which filters out GPS spikes, and then create an incident record with coordinates, timestamp, and device ID.

A webhook node could push that incident to their CRM, creating a compliance record without manual intervention.

Unauthorized movement detection addressed a different operational pressure: scooters moving when no active rental session existed. This pattern often indicated theft or tampering. The operator wanted to trigger an alert to operations, attach GPS coordinates, and remotely activate the scooter's onboard alarm.

Tamper detection went further. Case opening, tracker detachment, and abnormal vibration patterns all suggested someone was trying to disable or steal the scooter. These events needed to activate the alarm immediately, forward position data to Navixy, and create a security incident for the response team.

Each automation addressed a real operational pain. Each automation was initially added to the existing flow.

Why the original setup stopped working

As often happens, this kind of scaling did not come without complications.

This time, the reason was an architectural limitation within IoT Logic. A device used as a data source could only belong to one flow at a time. Using it in a new automation automatically removed it from the previous one.

The workaround seemed reasonable at first. The operator combined payment logic, speed compliance, security alerts, and tamper detection into one large flow handling everything at once.

Technically, it worked. However, it became difficult to maintain very quickly.

Every new automation made the flow more fragile. Troubleshooting started taking longer because payment, compliance, security, and maintenance logic were all interconnected. Updating one part of the flow meant risking unrelated processes across the entire fleet.

The system that was meant to simplify operations was gradually becoming an operational bottleneck itself.

Transition toward modular operational automations

The turning point came when the platform enabled the same device to participate in multiple independent IoT Logic flows simultaneously. What had previously required one oversized operational workflow could now be separated into dedicated operational domains without duplicating telemetry streams or coupling unrelated automations.

The operator rebuilt their automations as four separate flows:

- Payment flow: payment confirmation triggers unlock command

- Compliance flow: sustained speeding triggers CRM incident

- Security flow: unauthorized movement triggers alert and alarm

- Tamper flow: case opening or detachment triggers alarm and position forwarding

Each flow received the same device telemetry. Each flow operated independently. Modifying the compliance logic had no effect on the payment logic. Testing the security flow didn't require testing the tamper flow.
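The independence described above can be illustrated with a minimal Python sketch. It is not how IoT Logic is implemented internally; the `dispatch` function, the flow functions, and the field names (`device_id`, `payment_confirmed`) are hypothetical and only show the idea of handing the same telemetry message to several flows while keeping a failure in one from affecting the others.

```python
import copy
from typing import Callable, Dict, List

Telemetry = Dict[str, object]
Flow = Callable[[Telemetry], List[str]]


def dispatch(message: Telemetry, flows: Dict[str, Flow]) -> Dict[str, List[str]]:
    """Hand the same telemetry message to every flow, isolating failures."""
    results: Dict[str, List[str]] = {}
    for name, flow in flows.items():
        try:
            # Each flow works on its own copy, so one flow cannot
            # change the message that another flow sees.
            results[name] = flow(copy.deepcopy(message))
        except Exception as exc:
            # A broken flow is recorded but does not stop the others.
            results[name] = [f"error: {exc}"]
    return results


def payment_flow(msg: Telemetry) -> List[str]:
    # Hypothetical field name for the payment confirmation flag.
    return ["unlock"] if msg.get("payment_confirmed") else []


def broken_compliance_flow(msg: Telemetry) -> List[str]:
    # Simulates a misconfigured flow to show that payment still works.
    raise ValueError("misconfigured threshold")


flows = {"payment": payment_flow, "compliance": broken_compliance_flow}
outcome = dispatch({"device_id": "scooter-1", "payment_confirmed": True}, flows)
print(outcome)
```

In this sketch the compliance flow fails, yet the payment flow still returns its unlock action, which mirrors the claim that modifying one flow has no effect on another.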

Let’s break them down real quick.

Flow 1: Scooter unlock after payment

Trigger: Payment confirmation event appears in the telemetry stream, indicating the rider has completed their transaction.

Action: IoT Logic sends a remote unlock command via GPRS. The scooter's output control activates, releasing the physical lock. The ride session begins.

Purpose: Reduce ride-start friction. Riders expect immediate response after payment. Delays create frustration and abandonment.
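As a rough illustration of the trigger logic, the sketch below reacts only when the payment attribute changes to a confirmed state, so repeated telemetry messages carrying the same flag do not send the unlock command twice. The class, the `payment_status` attribute, and the command dictionary are assumptions made for this example; in IoT Logic the equivalent logic is configured visually rather than written as code.

```python
from typing import Dict, List, Optional


class PaymentUnlockFlow:
    """Emit an unlock command when payment_status changes to 'confirmed'."""

    def __init__(self) -> None:
        # Last payment state seen per device (hypothetical attribute values).
        self._last_state: Dict[str, Optional[str]] = {}

    def on_message(self, msg: dict) -> List[dict]:
        device_id = msg["device_id"]
        state = msg.get("payment_status")
        previous = self._last_state.get(device_id)
        self._last_state[device_id] = state

        # Fire only on the transition into 'confirmed', not on every
        # message that still carries the confirmed flag.
        if state == "confirmed" and previous != "confirmed":
            return [{"device_id": device_id, "command": "unlock"}]
        return []


flow = PaymentUnlockFlow()
first = flow.on_message({"device_id": "scooter-1", "payment_status": "pending"})
second = flow.on_message({"device_id": "scooter-1", "payment_status": "confirmed"})
third = flow.on_message({"device_id": "scooter-1", "payment_status": "confirmed"})
print(first, second, third)
```

Tracking the previous state per device is a design choice of this sketch: it keeps a scooter from receiving duplicate unlock commands while the confirmed flag stays set.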

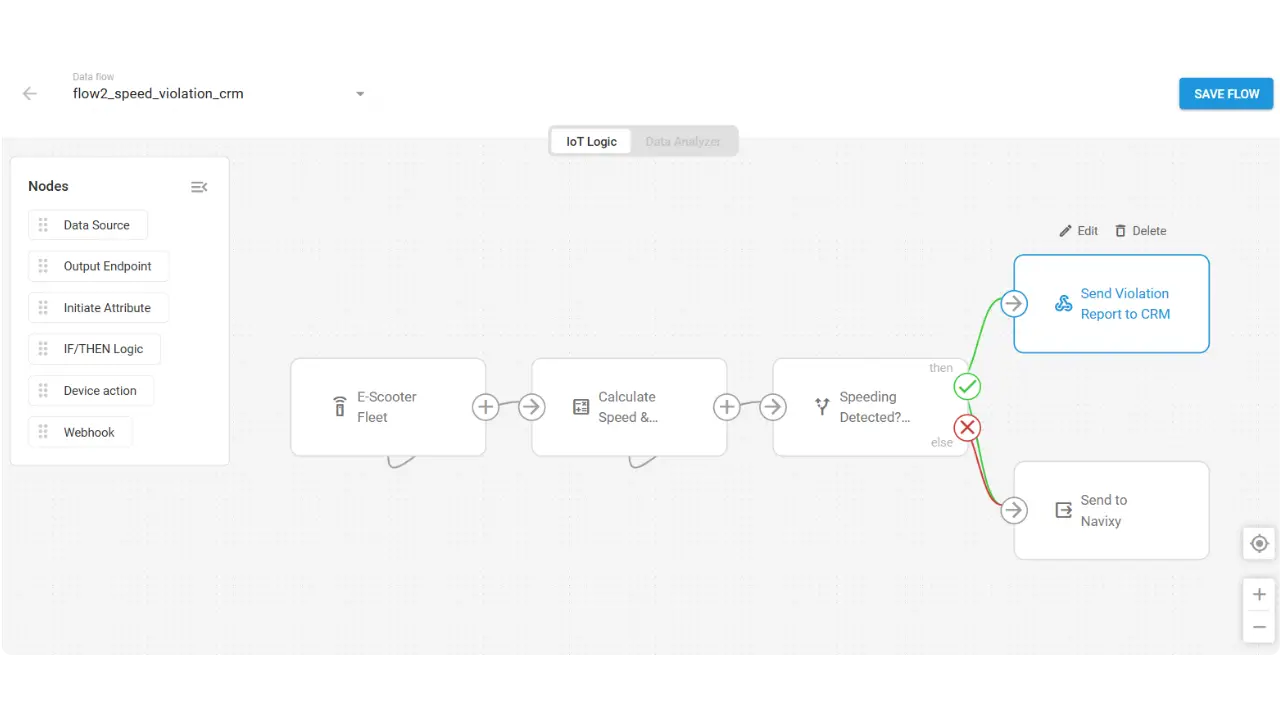

Flow 2: Speed limit violation reporting

Trigger: Speed exceeds 25 km/h across consecutive telemetry messages. The consecutive-message requirement filters GPS spikes and brief accelerations, catching only sustained violations.

Action: A webhook creates a CRM incident containing coordinates, timestamp, speed reading, and device ID. The compliance team receives a record ready for municipal reporting.

Purpose: Municipal compliance and rider accountability. Many cities require operators to document and address speed violations as a condition of their operating permits.
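The consecutive-message filter can be sketched as a per-device streak counter. The threshold of three consecutive messages is an assumption for illustration (the case study does not state the exact number), and the field names in the incident payload are hypothetical. Sending the payload to a CRM webhook is left out to keep the example self-contained.

```python
from collections import defaultdict
from typing import Dict, Optional


class SpeedComplianceFlow:
    """Report a violation only after several consecutive over-limit messages."""

    def __init__(self, limit_kmh: float = 25.0, required_consecutive: int = 3) -> None:
        self.limit_kmh = limit_kmh
        self.required_consecutive = required_consecutive  # assumed value
        self._streak: Dict[str, int] = defaultdict(int)

    def on_message(self, msg: dict) -> Optional[dict]:
        device_id = msg["device_id"]
        if msg["speed_kmh"] <= self.limit_kmh:
            # Any in-limit reading resets the streak, so a single
            # GPS spike never accumulates into a violation.
            self._streak[device_id] = 0
            return None

        self._streak[device_id] += 1
        # Report once, at the moment the streak reaches the threshold.
        if self._streak[device_id] != self.required_consecutive:
            return None
        return {
            "device_id": device_id,
            "timestamp": msg["timestamp"],
            "lat": msg["lat"],
            "lng": msg["lng"],
            "speed_kmh": msg["speed_kmh"],
        }


flow = SpeedComplianceFlow()
speeds = [20, 31, 22, 27, 28, 29, 30]  # 31 is an isolated spike
incidents = []
for i, speed in enumerate(speeds):
    msg = {"device_id": "scooter-7", "speed_kmh": speed,
           "lat": 52.52, "lng": 13.40, "timestamp": 1700000000 + i}
    incident = flow.on_message(msg)
    if incident is not None:
        incidents.append(incident)
print(incidents)
```

In this run the isolated 31 km/h reading is ignored, and a single incident is created when the third consecutive over-limit message (29 km/h) arrives.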

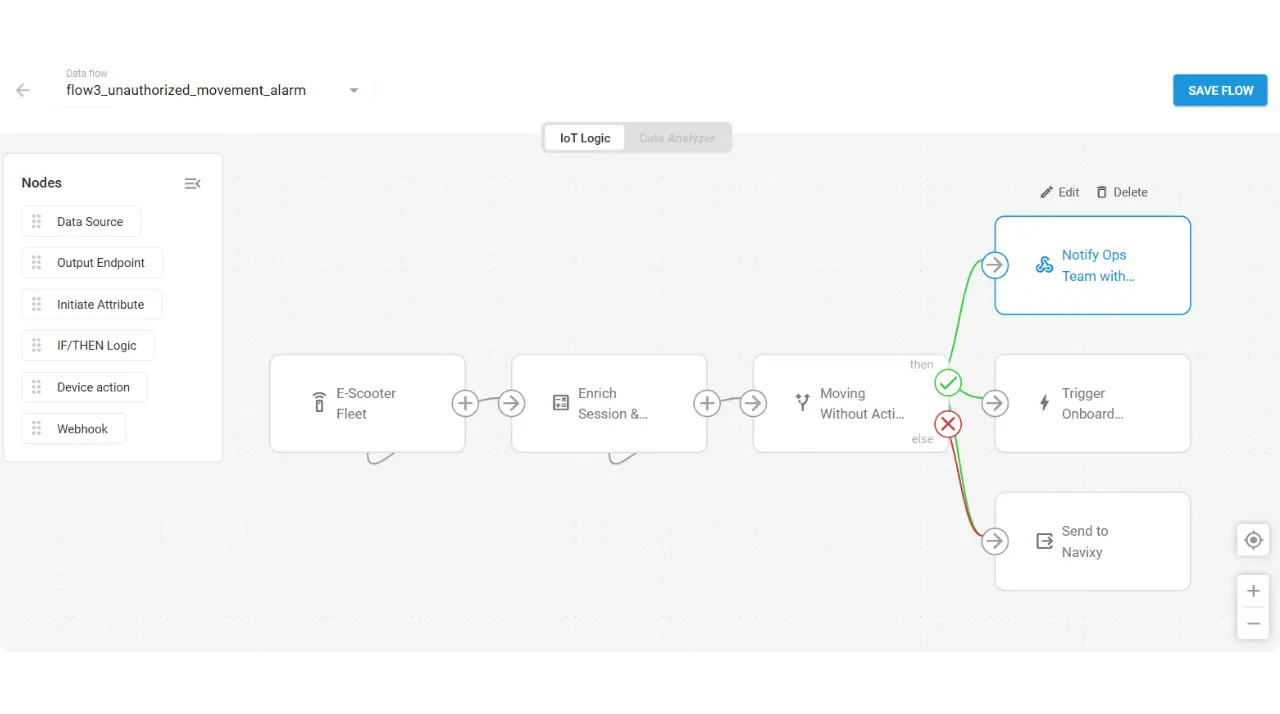

Flow 3: Unauthorized movement detection

Trigger: The scooter reports movement while no active paid session exists. This pattern suggests the scooter is being moved without authorization.

Action: A webhook alerts the operations team with GPS coordinates. The flow simultaneously sends a remote command to activate the scooter's onboard alarm. Telemetry continues forwarding to Navixy for tracking.

Purpose: Reduce theft response time and improve asset recovery. The alarm draws attention. The coordinates enable dispatch. The telemetry provides a trail.
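The rule above amounts to a simple condition: movement plus no active session. The sketch below shows that condition under stated assumptions: the `is_moving` flag, the set of active sessions, and the action dictionaries are hypothetical names, not part of any Navixy or IoT Logic API.

```python
from typing import List, Set


def unauthorized_movement_actions(msg: dict, active_sessions: Set[str]) -> List[dict]:
    """Return alert and alarm actions when a scooter moves without a paid session."""
    device_id = msg["device_id"]
    if not msg.get("is_moving") or device_id in active_sessions:
        return []  # stationary, or moving during a legitimate ride
    return [
        # Alert for the operations team, with coordinates for dispatch.
        {"type": "webhook_alert", "device_id": device_id,
         "lat": msg["lat"], "lng": msg["lng"]},
        # Remote command that switches on the onboard alarm.
        {"type": "device_command", "device_id": device_id, "command": "alarm_on"},
    ]


sessions = {"scooter-2"}
stolen = unauthorized_movement_actions(
    {"device_id": "scooter-1", "is_moving": True, "lat": 48.85, "lng": 2.35}, sessions)
rented = unauthorized_movement_actions(
    {"device_id": "scooter-2", "is_moving": True, "lat": 48.86, "lng": 2.36}, sessions)
print(len(stolen), len(rented))
```

Returning both actions from one check reflects the description above, where the alert and the alarm command are triggered at the same time.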

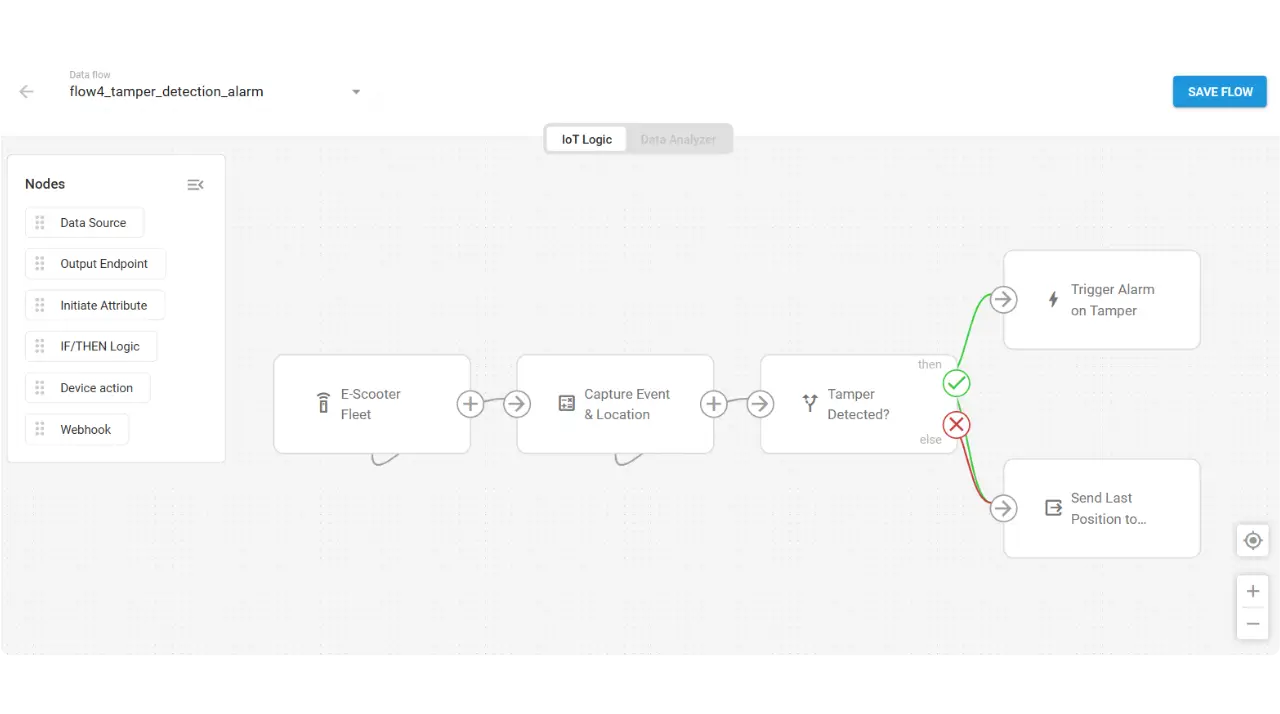

Flow 4: Tamper detection

Trigger: The scooter reports case opening, tracker detachment, or abnormal vibration activity. These signals indicate physical tampering.

Action: The onboard alarm activates immediately via remote command. Position data forwards to Navixy. A security incident event goes to the security operations center.

Purpose: Reduce tamper-related downtime and strengthen anti-theft protection. Immediate alarm activation discourages continued tampering. Position forwarding enables recovery.

Strategic outcomes for shared mobility operators

Separating automations into independent flows gave the operator significantly more flexibility as the fleet continued growing. Instead of constantly reworking one oversized system, teams could scale and adapt workflows independently.

Key operational outcomes included:

- Faster expansion into new cities: Compliance workflows could be adjusted to local regulations without redesigning payment, security, or maintenance automations.

- Lower operational risk: Problems inside one workflow no longer affected unrelated systems across the fleet.

- Simpler workflow ownership: Compliance, security, and operations teams could manage and update their own automations independently.

- Safer experimentation with new automations: New workflows could be tested and deployed gradually without making the entire system harder to maintain.

Scaling fleet operations without scaling operational chaos

For shared mobility operators, scaling a fleet eventually stops being just a hardware or logistics problem. It becomes a workflow-management problem. The more cities, compliance requirements, automations, and operational teams a fleet adds, the harder it becomes to keep unrelated systems from interfering with each other.

In this case, separating automations into independent IoT Logic flows gave the operator a way to keep scaling without turning the automation layer itself into another operational bottleneck. And as shared mobility operations continue growing more complex, that kind of flexibility starts becoming just as important as the fleet itself.

If you’ve been limiting your automations because devices could only belong to one flow at a time, now might be the right moment to revisit how your workflows are structured. Book a demo to discuss your automations and explore how they could be handled with IoT Logic.